12 Apr 2024

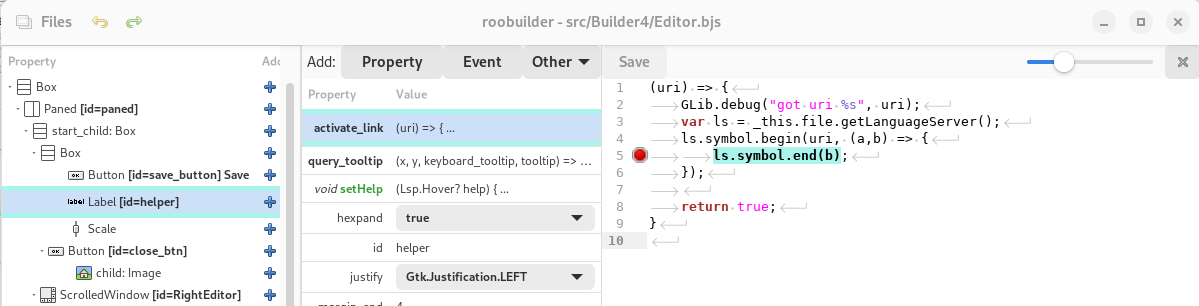

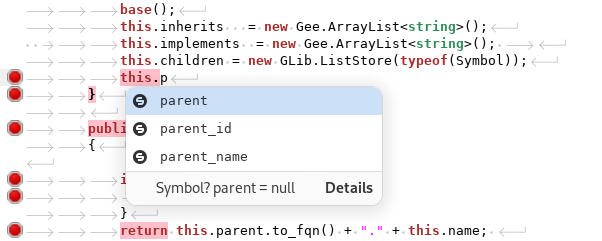

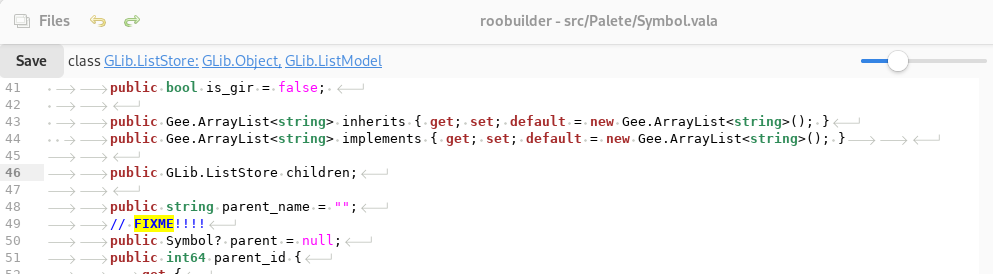

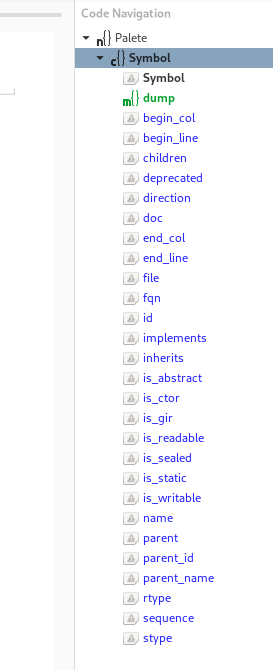

Some thoughts on the language server and its usefulness in the roobuilder

19 Nov 2023

Roo Builder for Gtk4 moving forward

Porting has been quite an effort. The initial phase of switching to gtk4 libraries and fixing all the compiler errors took a few months (this is very much a pet, part time project.. so things take a while).

After having reached the point where there were no compiler errors (although it still has plenty of warnings). The next step was to see if it ran.. which obviously it failed at badly.

Its been a long road, and it started with issues around how gtk4 windows are more deeply tied to the application class. Been so long since i fixed that, so ive forgotten the details. In the gtk3 version, although it had a application class, it did not really do that much.

More recently, though, I've been going through the interface migration. Key to this has been the migration away from gtktreeview, which seems to be unusable now for drag and drop of outside elements, along with being depricated. So i had to migrate all the code to use columnview with treemodels.

On the positive side the use of an array of objects as the storage for trees, and the new method to render cells is a massive improvement on gtk3. And works like magic with vala objects. Especially clever is the methods to update cell content. Which you can create get/set properties on the vala object. And any change to the property instantly updates label text

This makes the code that manages node tree, the core to the ui builder, massively simpler. No need to keep calling refresh or deal with tree iteraters like gtk3 treeview.

this.el.bind.connect( (listitem) => {

var lb = (Gtk.Label) ((Gtk.ListItem)listitem).get_child();

var item = (JsRender.NodeProp) ((Gtk.ListItem)listitem).get_item();

item.bind_property("to_display_name_prop", lb, "label", GLib.BindingFlags.SYNC_CREATE);

});

The method for sorting these view is also nothing short of magical, when you finally find the code example for sorting.. its very easy.. but hunting down a good sample was difficult.

this.el.set_sorter( new Gtk.StringSorter( new Gtk.PropertyExpression(typeof(JsRender.NodeProp), null, "name") ));

// along with this (in the sorter

this.el.set_sorter(new Gtk.TreeListRowSorter(_this.view.el.sorter));

The process had not been without difficulty though, the new widgets seriously lack the ability to convert click events into cell row/column detection. Essential for drag drop, The only way to do it is to iterate through the child and siblings and use math to calculate which row was selected. This made more complicated as the recent update to the widget changed the structure of the widgets. Breaking all row detection code. Note to self... don't complain.. send a patch...

(double x, double y, out string pos) {

// from https://discourse.gnome.org/t/gtk4-finding-a-row-data-on-gtkcolumnview/8465

GLib.debug("getRowAt");

var child = this.el.get_first_child();

Gtk.Allocation alloc = { 0, 0, 0, 0 };

var line_no = -1;

var reading_header = true;

var curr_y = 0;

var header_height = 0;

pos = "over";

while (child != null) {

//GLib.debug("Got %s", child.get_type().name());

if (reading_header) {

if (child.get_type().name() == "GtkColumnViewRowWidget") {

child.get_allocation(out alloc);

}

if (child.get_type().name() != "GtkColumnListView") {

child = child.get_next_sibling();

continue;

}

child = child.get_first_child();

header_height = alloc.y + alloc.height;

curr_y = header_height;

reading_header = false;

}

if (child.get_type().name() != "GtkColumnViewRowWidget") {

child = child.get_next_sibling();

continue;

}

line_no++;

child.get_allocation(out alloc);

//GLib.debug("got cell xy = %d,%d w,h= %d,%d", alloc.x, alloc.y, alloc.width, alloc.height);

if (y > curr_y && y <= header_height + alloc.height + alloc.y ) {

if (y > (header_height + alloc.y + (alloc.height * 0.8))) {

pos = "below";

} else if (y > (header_height + alloc.y + (alloc.height * 0.2))) {

pos = "over";

} else {

pos = "above";

}

GLib.debug("getRowAt return : %d, %s", line_no, pos);

return line_no;

}

curr_y = header_height + alloc.height + alloc.y;

if (curr_y > y) {

// return -1;

}

child = child.get_next_sibling();

}

return -1;

}

The other issue is that double clicking on the cells is a bit haphazard. Sometimes it triggers.. other times you feel like you are pressing a lift button multiple times hoping it will come faster.

https://gitlab.gnome.org/GNOME/gtk/-/issues4364

The other bug i managed to find was putting dropdowns on popovers. Don't do this it will hang the application. At present I've used a small column view to replace it.. but it needs a better solution as the interface is very confusing.

https://gitlab.gnome.org/GNOME/gtk/-/issues/5568

The other hill that I've yet to climb is context menus. Gtk4’s menu system is heavily focused on application menus. And they have dropped menuitems completely.

Context menus are usually closely related to the widget that triggers them, and sending a signal to the application, that then sends it back to the widget, seems very unnatural. For the time being I've ended up with popovers with buttons.. not perfect, but usable

I also had the opportunity to change the object tree adding for objects that ar properties of the parent.

Previously, adding a model to a columnview was done by using the add child + next to the columview item in the tree. It wouls show a list of potential child object, including ones that are properties.

Which is only working for the Roo Library so far

I've removed those from that object list now, and put them in the properties dialog, which then lists all the potential implementations of the property, if you expand it the list, and double click to add it to the tree

Anyway, now to work out some good demo videos

05 Mar 2020

Clustered Web Applications - Mysql and File replication

In mid-2018, one of our clients asked if we could improve the reliability of their web applications. The system was developed by us and was hosted on a single server in Hong Kong. Over the last 5 years or so, the server had been sporadically unavailable due to various reasons

- DDOS attack on the Hosting provider's network

- Hardware failure - both on the hosting machine and the provider's network hardware.

- Disk capacity issues

While most of these had been dealt with reasonably promptly, the service provided by our client to their customers had been down for periods up to a day. So we started the investigation into the solution to make this redundant and considerably more reliable.

Since this was not a financial institution, with endless money to throw at the problem, Amazon, Azure etc. were considered to pricey, and even if they did provide a more reliable solution, there was still a chance that it could still be susceptible to network or DDOS attacks. So the approach we took was to build a cluster of reasonably priced servers (both physical and virtual) hosted at multiple hosting providers.

This represented the starting point, we had already separated the Application and Mysql server into individual containers. Which made backups and restoration trivial, along with theoretically making the cluster implementation somewhat simpler

To implement a full clustering solution, not a redundancy solution, we needed to solve a few issues

- Mysql Clustering

- File system Clustering

- Load Balancing

- Private Networking between the various components.

The simplest of these was the Load balancing, we had already been using Cloudflare to provide free SSL (we tend to use letsencrypt on solutions these days, but Cloudflare has proved reasonably resilient. although it does still result in a single point of failure from our perspective)

The other two however proved to be more challenging than we expected.

Mysql Clustering

Anyone who has used MySQL, normally at some point set's up a master/slave backup system. It's pretty reliable, however, when it comes to switching from the master/slave, we concluded that the effort involved, especially considering the size of our database would be problematic. So we started testing out the Mysql Clustering technologies (note we tended to stick to classic MySQL technologies, rather than trying out any of the forks/offshoots).

After our initial analysis, we settled on NDB clustering, the setup of which proved more than a little problematic. In part due to the database restrictions that the storage engine enforced, but eventually having overcome the initial issues with this, by modifying our schemas slightly, we discovered that in our usage scenario, that NDB performance was significantly slower than that of a standalone InnoDB server. To the point where the application became un-usable. This may have been due to various factors, memory limitations, one of the machines using a physical rather than SSD drive. But after many hours of research and testing, we concluded that it was not a viable solution.

After throwing all that research in the bin, the next alternative was an InnoDB cluster. Again this involved quite a learning curve as management of the cluster is done via mysqlsh, which due to the nature of the internet has a wealth of out of date contradicting information all over the internet. Along with rather limited precise information on working configurations. Eventually, we managed to solve both the multitude of configuration settings (enough memory allocated to migrate) and minor schema modifications to enable replication to work. Resulting in the first part of the puzzle being solved.

The final solution for the mysql server involved hosting on 1 physical machine, one virtual machine in Hong Kong and a Linode VPS in Singapore. This has generally met the initial goals of more stability, however, we do have a long term plan to move more to Linode, and remove the Hong Kong physical hardware, as this seems to be our most frequent point of failure. Saying that the machine and network have failed multiple times, but the services have remained up throughout.

In addition to the servers, we also added mysqlrouter to the mix, in the initial design it's running on the same container as the mysql server. in hindsight, it would have been better to have a separate container for this, and the next phase the mysql servers will be hosted on seperate VPS's, and the mysqlrouter container will be running on the application server VPS's.

File Replication

We did some quite extensive testing of clustered file systems, including getting the application up and running on gluster. This again however proved to be a performance issue, and we found that gluster killed both CPU and memory usage.

Eventually, we settled on a multi-pronged approach, the first being unison for two way synchronization. The second being splitting the file system into 'active areas' and archive areas. Our applications generally create files in directories based on YYYY/mm/dd - so a simple script was written to move directories older than a few days from the 'hot' storage area which was replicated using unison (based on inotify watches) and a 'cold' area, that was kept in sync daily using rsync. Softlinks were then created the hot file areas to point to the correct place in the cold storage.

This meant we could handle quite a bit of file activity as one of the applications is constantly creating files, and have those files available on multiple servers. For the next phase of development, we will be running unison in multiple containers for each pair of replication targets. And also considering NFS servers over TCP rather than replication for our main two front end servers.

Private Networking

One of the early issues before we set this all up was to work out how all these different servers would communicate, securely with each other. Normally for private networking, we had used OpenVPN. This is a client-server spoke system, however for a reliable network we would not want to have a single point of failure, and writing scripts to flip between different OpenVPN servers if something failed seemed rather messy.

To solve this we came across tinc, which solved our redundancy problem brilliantly. Tinc is a mesh VPN, which, in theory, can route around broken connections, so with servers A,B,C - if the line is down between C&A then it will route via B. It, as we found later does not handle a 'poor' (dropped packets) connection between C&A very well. You also have to make sure all the firewalls are correctly configured as if you incorrectly configure access to 'C&B', in that 'C&B' can see A, but A can connect directly to C&B, the network will work, however, will fall apart as soon as C goes down. It's a real, cross the t's and dot the I's network, get it correct otherwise when it fails you will be hunting down the issue for a while.

This is a map of the current configuration

03 Jan 2019

GitLive - Branching - Merging

28 Oct 2016

PDO_DataObject Released

#pear channel-discover roojs.github.com/pear-channel

#pear install roojs/PDO_DataObject-0.0.1

Documentation

17 Aug 2016

PDO_DataObject is under way

- General Compatibility to DB_DataObject with a few exceptions - methods relating to PEAR::DB have been removed, and replaced with PDO calls

- New simpler configuration methods, with some error checking

- A complete test suite - which we will apply to DB_DataObject to ensure compatibility

- Chaining for most methods so this works

- Exceptions by default (PEAR is an optional dependency - not required)

- It should be FAST!!! - standard operations should require ZERO other classes - so no loading up a complex set of support classes. (odd or exotic features will be moved to secondary classes)

19 Nov 2015

Mass email Marketing and anti-spam - some of the how-to..

I'm sure I've mentioned on this blog (probably a few years ago), that we spent about a year developing a very good anti-spam tool. The basis of which was using a huge number of mysql stored procedures to process email as it is accepted and forwarded using an exim mail server.

The tricks that it uses are numerous, although generally come from best practices these days.

The whole process starts off with creating a database with

- 'known' servers it has talked to before

- 'known' domains it has dealt with before.

- 'known' email address it has dealt with before.

If an email / server / domain combo is new and not seen before, then apart from greylisting, and delaying the socket connections we also have a optional manual approval process. (using the web client).

Moving on from that we have a number of other tricks, usually involving detecting url links in the email and seeing if any of the email messages that have been greylisted (with different 'from') are also using that url.

On top of this, is a Web User interface to manage the flow and approvals of email. You can see what is in the greylist queue, set up different accounts for different levels of protection (either post-delivery approval, or pre-delivery approval etc..)

This whole system is very effective, when set up correctly. It can produce zero false negatives, and after learning for a while, is pretty transparent to the operations of a company. (email me if you want to get a quote for it, it's not that expensive...)

So after having created the best of breed anti-spam system, in typical fashion, we get asked to solve the other end.. getting large amounts emails delivered to mailing lists.

If you are looking for help with your mass email marketing systems, don't hesitate to contact us sales@roojs.com

Read on to find out how we send out far to many emails (legally and efficiently)

16 Nov 2015

Hydra - Recruitment done right

20 May 2015

More on syntax checking vala - and a nice video

09 May 2015

Fetching Resources from github in the App Builder and fake web servers

Follow us

Follow us

-

- Some thoughts on the language server and its usefulness in the roobuilder

- Roo Builder for Gtk4 moving forward

- Clustered Web Applications - Mysql and File replication

- GitLive - Branching - Merging

- PDO_DataObject Released

- PDO_DataObject is under way

- Mass email Marketing and anti-spam - some of the how-to..

- Hydra - Recruitment done right

Blog Latest

-

Twitter - @Roojs